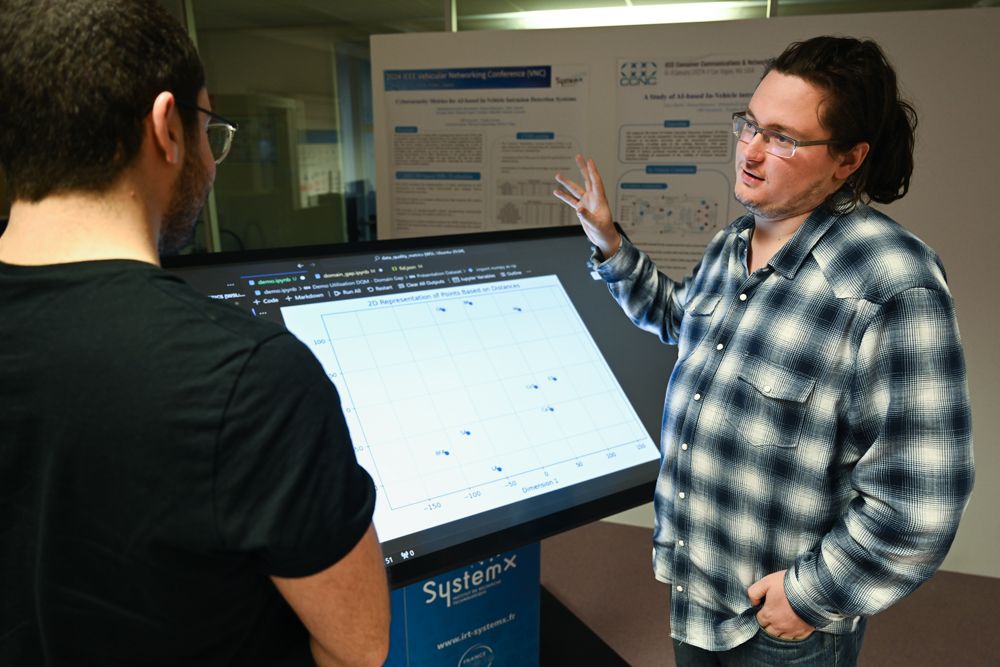

In collaboration with APSYS (Airbus), Expleo, Naval Group and its subsidiary SIREHNA, and Stellantis, IRT SystemX has developed a unique demonstrator: Robust AI (ROBUSTness toolkit in AI). This tool combines simulation-based approaches with mathematical theories based on formal methods to evaluate the performance and safety of autonomous driving pilots.

Decision-making functions based on artificial intelligence, and more specifically on deep learning, must meet operational safety requirements and demonstrate their reliability in a given area of operation. The solution developed consists of technological building blocks that have enabled significant advances in this evaluation.

With this work, the institute has been able to provide the industrial world and the scientific community with a unique approach in this field, combining operational safety and formal proofs for autonomous driving based on a model trained by reinforcement learning.

Our demonstrator integrates the first building blocks of a methodology for evaluating the robustness properties of an autonomous driving pilot trained by reinforcement learning, while meeting the safety requirements inherent to autonomous vehicles. It is currently being transferred to our partners, and its prospects for use and development are very promising.

Hatem Hajri, Research Engineer, AI Architect, IRT SystemX

Interview

Patrick Boutard

AI Trust, Safety and Compliance Lead,

Stellantis

What was the aim of your work with IRT SystemX?

Back in 2017, Stellantis identified that the robustness of its algorithms needed more work and optimisation to enhance both performance quality and operational safety in the context of using AI and statistical learning for Advanced Driver Assistance Systems (ADAS) and autonomous vehicles.

We have broken down the issue into two parts: a theoretical axis based on formal validation with the analysis of operational coverage, and a practical methodology axis to increase the robustness of learning and performance evaluation, taking into account unlikely scenarios. These corner cases matter because the design domain is a long way from covering the typical sphere of operation: the most unexpected events can happen on the road!

What were the main results of your collaboration? How do you plan to reuse them within the Stellantis group?

As expected from the beginning, the theoretical axis was challenging and, in spite of our progress, formal verification is still an open question. In the practical part, we have managed to make significant progress on the robustification of learning by coupling adversarial attacks and reinforcement learning. A set of performance and safety indicators and a simulation environment round off the deliverables and allow for operational implementation in our development studies.

Data science and AI